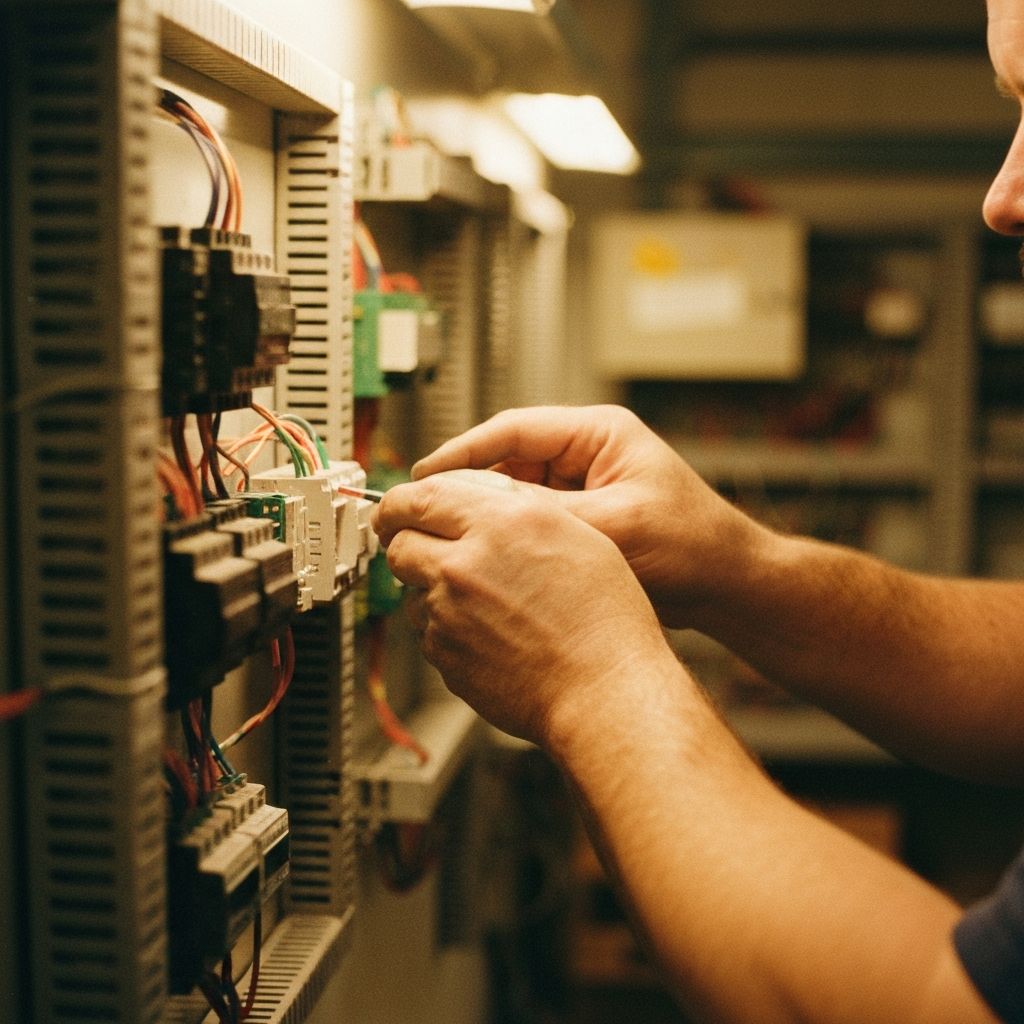

I've been thinking about electricians lately. When an electrician wires your house, their work gets inspected. They hold a licence. There's a certificate of compliance. If something goes wrong, there's a clear chain of accountability.

Software has never had any of that. And somehow, we've decided the answer to "AI is replacing developers" is to just let it rip.

AI Writes What You Ask For — Not What You Need

The risk isn't that AI-generated code is unreliable. The code is usually fine. The risk is that AI writes exactly what you ask for, and most people don't know what to ask for.

Tell Claude to build a journal entry system and it'll build you a good one. It'll ask smart questions about error handling, input validation and user roles. But it won't:

- Tell you that you need to prevent duplicate postings

- Enforce segregation of duties

- Think about your audit obligations or data retention requirements

- Ask "what happens when someone tries to post to a closed period?"

Because the AI asks good questions about some things, you assume it's considered all the things. It creates an illusion of rigour. You stop doing the hard thinking because it feels like someone already did it. Nobody did.
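To make that concrete, here is a minimal sketch of what three of those unasked questions look like once someone writes them down as rules. It is an illustration, not a real accounting system: `JournalEntry`, `Ledger`, `PostingError`, `closed_through` and the field names are all hypothetical, and audit and retention obligations are not covered at all.

```python
from dataclasses import dataclass, field
from datetime import date
from decimal import Decimal


class PostingError(Exception):
    """Raised when a journal entry fails one of the control checks."""


@dataclass(frozen=True)
class JournalEntry:
    entry_id: str        # hypothetical unique key, used to detect duplicates
    posting_date: date
    amount: Decimal
    prepared_by: str
    approved_by: str


@dataclass
class Ledger:
    closed_through: date                      # assumed: last day of the latest closed period
    posted: dict = field(default_factory=dict)

    def post(self, entry: JournalEntry) -> None:
        # Control 1: prevent duplicate postings.
        if entry.entry_id in self.posted:
            raise PostingError(f"duplicate posting: {entry.entry_id}")
        # Control 2: segregation of duties (preparer cannot approve their own entry).
        if entry.prepared_by == entry.approved_by:
            raise PostingError("segregation of duties: preparer cannot approve")
        # Control 3: reject postings dated inside a closed period.
        if entry.posting_date <= self.closed_through:
            raise PostingError(f"period is closed through {self.closed_through}")
        self.posted[entry.entry_id] = entry
```

None of these checks is hard to write. The hard part is knowing they need to exist, and that knowledge has to come from someone who understands the domain.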

The Context Problem

Domain knowledge becomes more important, not less. These are the things that don't make it into the AI's context:

- Business rules that live in the heads of people who've spent years in a domain

- Edge cases that only surface after years of operating in a regulated environment

- Regulatory requirements specific to your industry or jurisdiction

- Institutional knowledge — the "we do it this way because in 2019 we got burned" stuff

Very little of that is in the training data. It has to come from you. I've spent 30+ years in tech, a lot of it in insurance and regulated industries. The systems that fail in production rarely fail because the code was bad. They fail because nobody specified what "right" actually looked like.

The Accountability Gap

A peer-reviewed Stanford study (Perry et al., ACM CCS 2023) found that participants with access to an AI assistant wrote significantly less secure code than those working without one, while being more likely to believe they'd written secure code. We're not just checking less. We're more confident while we do it.

Electricians use better tools than they did 30 years ago. But they still need to understand the building code, the load calculations and what happens when something fails. The tools got better — the accountability didn't go away. Their work gets inspected before the power goes on, not after the house burns down.

In software, we're handing everyone faster tools, skipping the inspection and turning the power on.

When something goes wrong — and it will — the first question the insurer will ask is: "What was your process?" If the answer is "Claude seemed confident and it asked good questions," good luck with that claim.

So here's my question for anyone leading a tech team: who's accountable for yours?